This is just a post to keep my thoughts together, and so that I have a place to review what I did in case (when) I forget.

1) reviewed how to use ArcMap's ModelBuilder on YouTube.

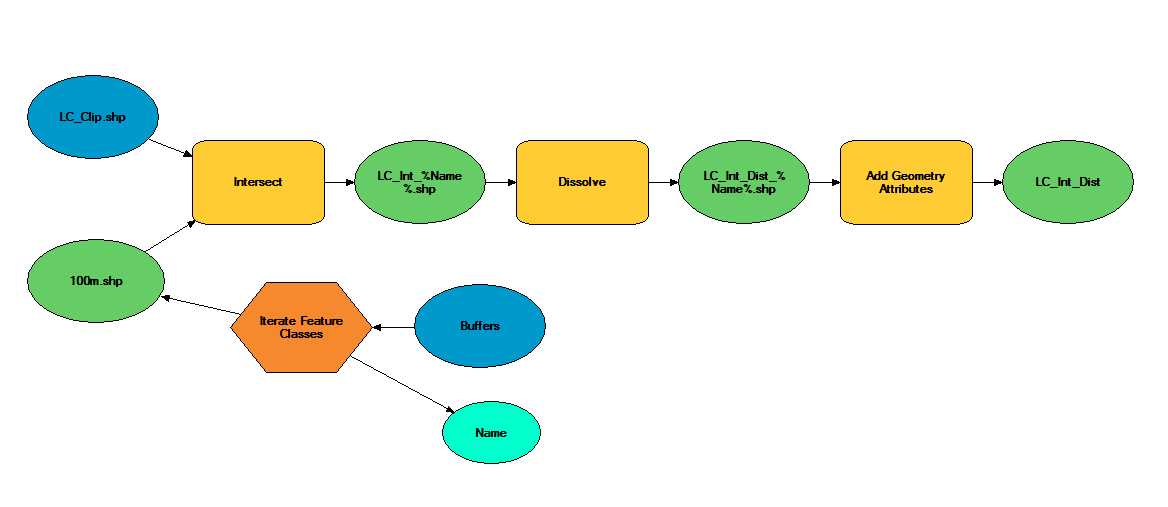

2) created a model in ModelBuilder to streamline workflow

3) model doesn't work

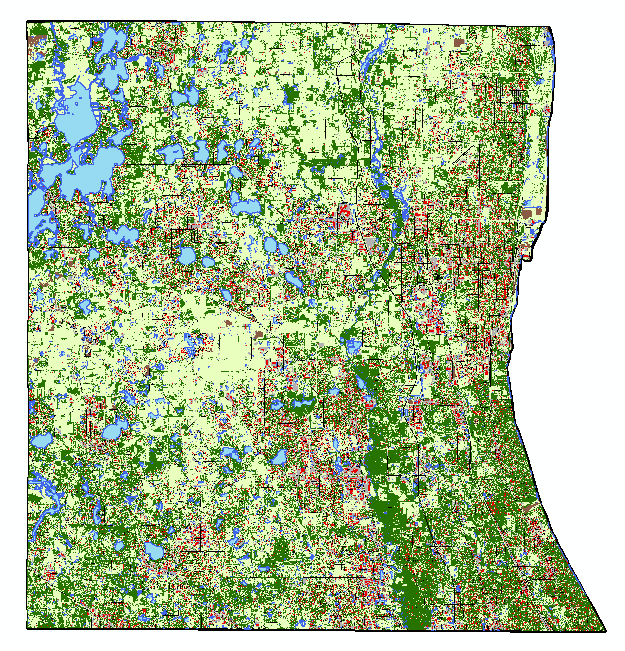

3) realized the model isn't broken, it's just still processing. It was taking forever to run because my 10m resolution landcover shapefile is massive (1.91 GB)

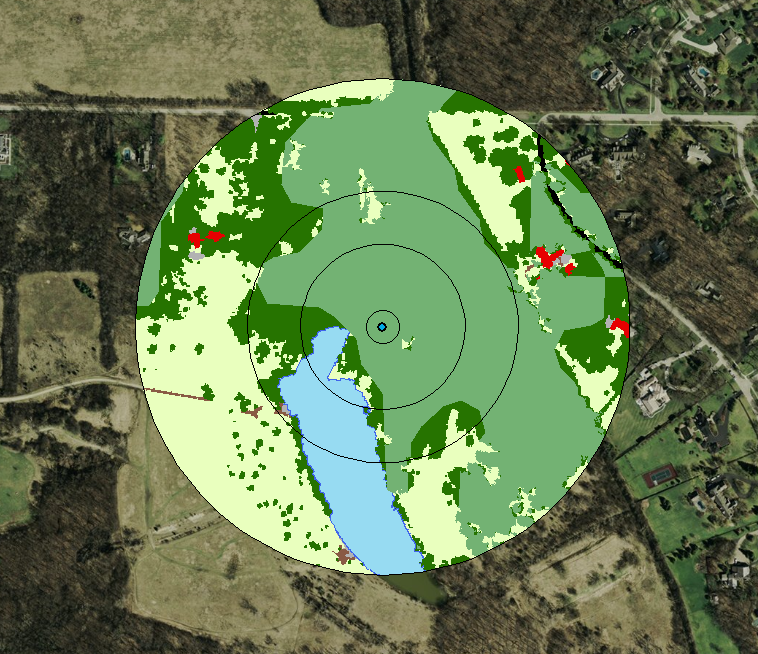

4) decided to clip landcover .shp to the largest extent I need (a 300m buffer around points). This took ~45 minutes to run, but reduced file size to 63 MB, a 97% reduction.
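A quick check of that reduction figure, as a minimal sketch. It assumes 1 GB = 1000 MB; the variable names are just for illustration.

```python
# File sizes from the step above. Assumes decimal units (1 GB = 1000 MB).
original_mb = 1.91 * 1000
clipped_mb = 63

# Fraction of the original file size removed by clipping
reduction = 1 - clipped_mb / original_mb

print(f"{reduction:.0%} smaller")
```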

5) reviewed changes suggested by editor for paper submitted to The Kingbird while clipping processed

6) tested model, model worked

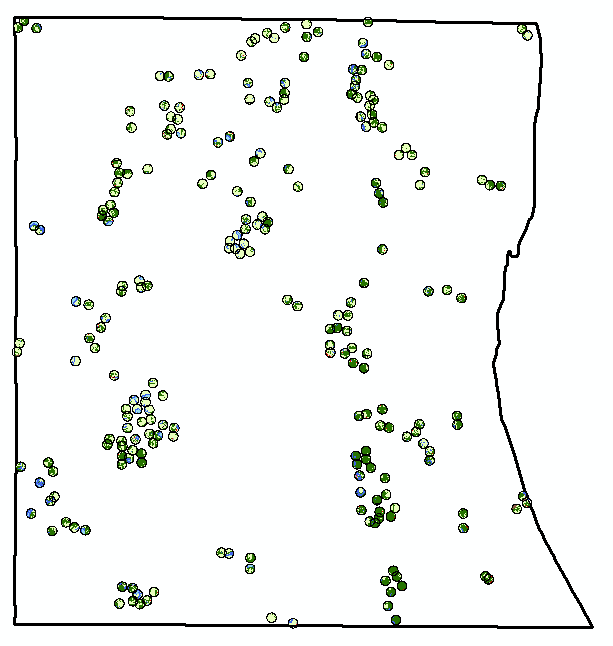

7) added iterative function to model so I can run it once instead of separately for each buffer (20, 100, 164, 300).

8) model successful! Using a model instead of doing everything individually was not only a time saver, but a huge mental energy saver. In the image below, each yellow box is a geoprocessing function. That's three per buffer, so after creating the model, I used one click to do 12 separate functions, and saved about 120 mouse clicks and brain decisions (or things that could be messed up). AUTOMATE!

9) use Select By Attributes to copy forest cover (gridcode=1) into Excel (site and obs covarites.xlsx)

10) use VLOOKUP to fill in column of all potential sites

11) transform into proportion of buffer by dividing by the area of the buffer (a=π*r^2)

12) do this for each of the 4 buffer zones
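Steps 10 to 12 could also be sketched in Python instead of Excel. This is only a rough illustration, not what I actually ran: the site names and forest areas are made up, it assumes the forest areas are in square metres and the buffer radii are in metres, and it assumes a site with no forest record gets 0 (VLOOKUP itself would return #N/A there).

```python
import math

# Buffer radii from step 7 (assumed to be in metres)
BUFFER_RADII_M = [20, 100, 164, 300]


def buffer_area(radius_m):
    """Area of a circular buffer, a = pi * r^2."""
    return math.pi * radius_m ** 2


def forest_proportions(all_sites, forest_area_by_site, radius_m):
    """Look up each site's forest area (like VLOOKUP) and divide by buffer area.

    Sites missing from the lookup are treated as having no forest (an assumption).
    """
    area = buffer_area(radius_m)
    return {site: forest_area_by_site.get(site, 0.0) / area for site in all_sites}


# Hypothetical data: three sites, forest area (m^2) inside the 300 m buffer
all_sites = ["A", "B", "C"]
forest_300 = {"A": 141371.67, "C": 28274.33}

props = forest_proportions(all_sites, forest_300, 300)
for site, p in props.items():
    print(site, round(p, 3))
```

Running the same function once per radius in `BUFFER_RADII_M`, with that buffer's forest areas, covers all 4 buffer zones.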

13) then I dicked around for a bit because I'm weak, but I was pondering my next step all the while

14) decided to modify my model for the buckthorn layer I have, which is 5m resolution and county level

15) it took three easy steps and less than a minute to modify my model to use the buckthorn layer as its input, and name the output files accordingly. Taking the time to create this model has now saved me an additional 12 geoprocessing function steps. AUTOMATE!
